PSC Myths vs. Reality

The 5 most common PSC myths that are not supported by PSC Data

MYTH #1

Myth vs Reality: Detained Ships Are Not Problematic – They Are Unprepared

- The Myth

“Detained ships are problematic, old, or poorly managed. We don’t have ‘problem ships’ in our fleet. That won’t happen to us – we run a tight ship, we are a blue chip company.”

This is one of the most persistent myths in shipping. Detentions are often seen as a red flag for systemic failure – poor maintenance, weak management, or incompetent crew.

The underlying belief is: “That won’t happen to us – we run a tight ship.”

But the data tells a different story.

- Normalcy Bias - “It Won’t Happen to Us”

This bias explains the belief behind Myth #1 that detentions mainly happen to “problem ships.” In reality, PSC detentions are statistically normal across the global fleet. Operators underestimate their own exposure and assume their vessels are less likely to be targeted, creating dangerous complacency in preparation.

- The Reality

REALITY #1

Detentions are widespread and non-discriminatory

Across Port State Control regimes globally, approximately 7,000 detentions occur every 36 months, involving around 5,700 unique vessels.

This is not a marginal phenomenon. With more than 1 in 7 ocean-going commercial ships projected to face detention at least once in the coming 36 months, detentions are statistically normal – not an exception.

This includes ships of all types, ages, and management standards.

More than 1 in 7 ships will face detention at least once in 36 months

REALITY #2

Most detained ships have no previous detention record

More than 80% of ships detained within a 36-month window have only one detention in that period.

They are not repeat offenders. They are vessels with otherwise clean records – simply caught unprepared at the wrong time.

The idea of the “problem ship” is largely a myth.

The truth is: one-off detentions are the rule, not the exception.

More than 80% of ships detained within a 36-month window have only one detention
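As a rough illustration of how the one-off share could be measured, the sketch below counts detentions per vessel in a single 36-month window. The vessel IDs and the `single_detention_share` helper are hypothetical and are not taken from RISK4SEA's data or tooling.

```python
from collections import Counter

# Hypothetical detention log for one 36-month window: one vessel ID per detention.
detentions = ["IMO-A", "IMO-B", "IMO-C", "IMO-C", "IMO-D", "IMO-E"]

def single_detention_share(detained_ids):
    """Share of detained vessels with exactly one detention in the window."""
    per_vessel = Counter(detained_ids)
    if not per_vessel:
        return 0.0
    once = sum(1 for n in per_vessel.values() if n == 1)
    return once / len(per_vessel)

share = single_detention_share(detentions)
print(f"{share:.0%} of detained vessels were detained only once")
```

In this made-up sample, four of the five detained vessels appear only once, so the share is 80%.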

REALITY #3

SPOT analysis proves no Manager is immune

RISK4SEA’s SPOT Matrix (Ship-Port Tier) reveals that detentions occur across all management profiles, including Top-Tier managers.

Data shows that even highly ranked operators, with a low historic Deficiency Per Inspection (DPI) or Detention Rate (DER), occasionally get caught by a focused PSC inspection (PSCI).

This is not about operator “quality” – it’s about port-specific preparation failures.

Much like the Pareto principle, SPOT analysis shows that the 15% of PSCIs that produce 85% of detentions can be identified well in advance.

15% of the PSCIs create 85% of the detentions

REALITY #4

PRL research exposes the unpredictability of PSCI exposure

The POCRA Risk Level (PRL) research proves that detentions often occur when vessels are not even “eligible” under formal PSCI targeting logic.

- 1 in 5 inspections happens outside the official open window.

- 1 in 4 detentions hits ships with closed PRL windows.

That means a vessel can be off the radar – but still detained. Eligibility offers no protection if unprepared.

1 in 5 inspections happens outside the official open window

- At A Glance

About 7,000 detentions have been recorded in the last 36 months

More than 80% of ships detained have NO other detention within the last 36 months

More than 1 in 7 ocean-going ships (45k in total) will be detained at least ONCE in the next 36 months

Conclusion

The majority of ships detained are NOT substandard but UNPREPARED

- The Takeaway

Reality: Detained ships are not problematic – they are unprepared.

They are hit not because they are poorly managed, but because they walked into a port with:

- The wrong preparation,

- At the wrong time,

- Facing the wrong focus.

This is why data-driven risk intelligence, not generic compliance, is the only sustainable strategy:

- Port-specific readiness (via SPARC & SPOT insights)

- Predictive targeting risk (via PRL levels)

- Continuous benchmarking

- Focused crew preparation

At RISK4SEA, we help managers shift from defensive thinking to proactive risk reduction. Because detention is not a sign of failure – it’s a sign of being caught unready. And that can be fixed.

MYTH #2

Myth vs Reality: In PSC, MoU Does not Matter – Port Matters

- The Myth

“We’re prepared. We check the MoU site and know the requirements. It’s all standardized. If I know the MoU rules, I’m covered anywhere in that MoU region.”

Many operators take comfort in monitoring their PSC MoU website. The logic is: “If I know the MoU rules, I’m covered anywhere in that MoU region.”

But that confidence is misplaced.

- Representativeness Bias - “Wrong Map Thinking”

This bias drives Myth #2 by encouraging operators to rely on the MoU label as a shortcut for risk. The assumption that ports within the same MoU behave similarly ignores well-documented port-to-port variability. As a result, managers navigate PSC risk using an oversimplified regional map instead of port-specific intelligence.

- The Reality

REALITY #1

There is no true MoU-wide standardization

Consider the scale and diversity:

- Paris MoU covers 1,508 PSC stations across 25 nations.

- Tokyo MoU spans 728 PSC stations in 19 nations.

While these MoUs coordinate principles, they do not enforce common standards of training, checklists, inspection practices, or interpretations.

What does that mean for you?

A ship inspected in one Paris MoU country faces a very different inspection approach in another. There is no guarantee of consistency even within a single MoU.

1,508 PSC stations across 25 nations in Paris MoU

REALITY #2

Even single-nation regimes show regional differences

Take the US Coast Guard (USCG) – often seen as the most unified PSC regime:

- 143 PSC stations in one country.

- One national oversight authority.

- One official checklist.

Yet despite that centralization, inspection patterns differ noticeably between:

- Atlantic Coast

- Pacific Coast

- US Gulf

Even with identical rules and oversight, local practice varies. It’s unrealistic to expect consistency across dozens of nations in an MoU.

143 PSC stations in one country for USCG

REALITY #3

Even the same port, same inspectorate, is not uniform

Our analysis of ports with 100+ PSC inspections in 36 months reveals:

- Across different ship types (Bulkers, Tankers, Containers), only 30–50% of findings are truly common.

- The remaining findings are highly specific, reflecting inspector focus, local risk priorities, or vessel preparation gaps.

Even in the same port, predictability is limited.

30–50% of findings are truly common across different ship types

- At A Glance

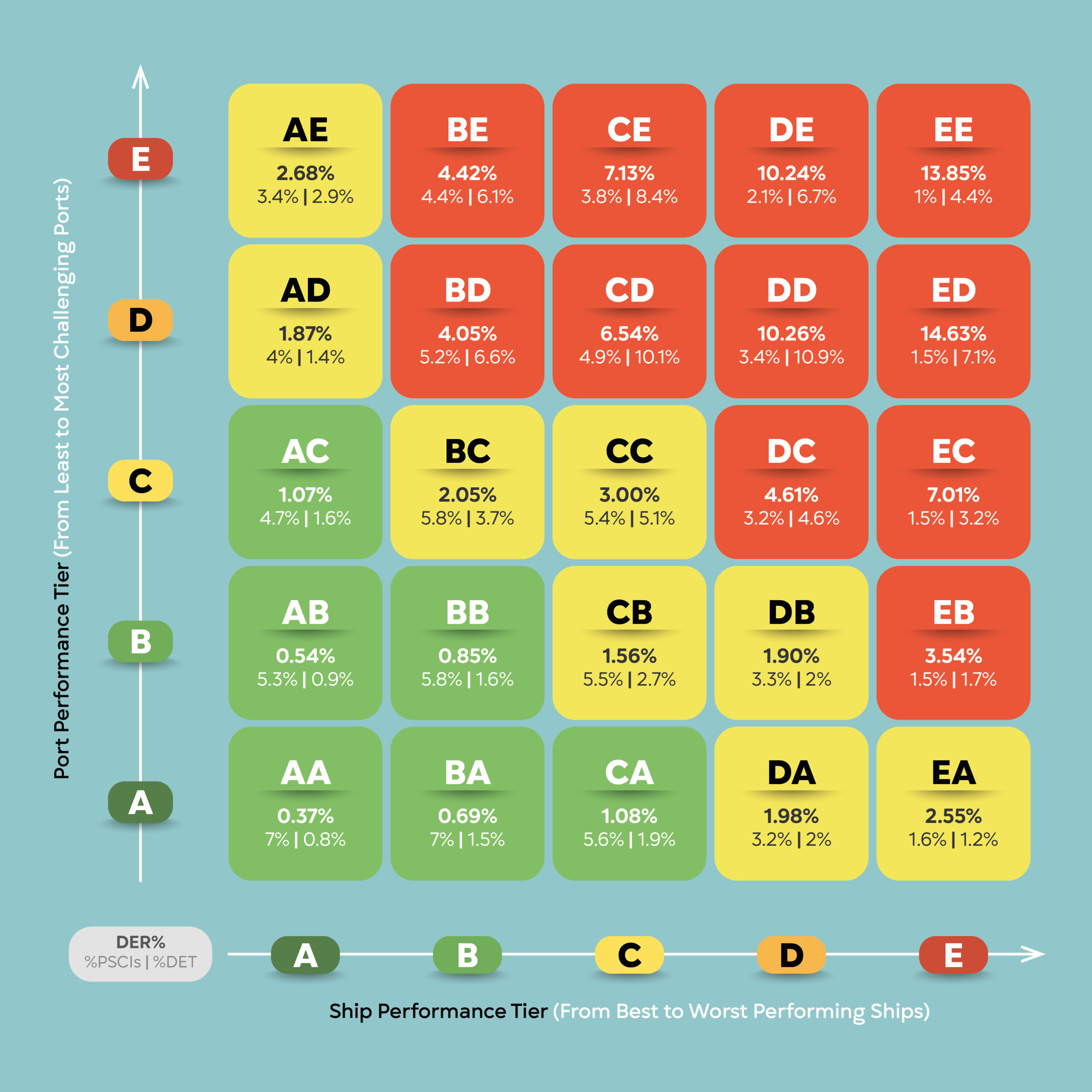

SPOT plots Ship & Port Performance Tiers (from A to E) as they stood before each PSCI. It covers more than 230k PSCIs of the global ocean-going fleet over the last 36 months.

The Pareto principle revealed: the SPOT zones marked red represent the 29% of PSCIs responsible for 73% of detentions. This is actionable intelligence on where to focus attention and direct training and resources.
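To make the concentration idea concrete, here is a minimal sketch that ranks ship-tier/port-tier cells by detention rate and reports how small a share of PSCIs covers a target share of detentions. The cell counts and the `concentration` helper are invented for illustration; they do not reproduce the SPOT Matrix or its underlying data.

```python
# Hypothetical (ship tier, port tier) cells -> (PSCI count, detention count).
# Every cell is assumed to have at least one PSCI.
cells = {
    ("A", "A"): (400, 2),
    ("C", "D"): (150, 12),
    ("E", "E"): (50, 16),
    ("B", "C"): (400, 10),
}

def concentration(cells, target_share):
    """Pick cells by descending detention rate until they cover target_share
    of detentions; return (chosen cells, share of PSCIs, share of detentions)."""
    total_psci = sum(p for p, _ in cells.values())
    total_det = sum(d for _, d in cells.values())
    if total_det == 0:
        return [], 0.0, 0.0
    ranked = sorted(cells.items(), key=lambda kv: kv[1][1] / kv[1][0], reverse=True)
    chosen, psci, det = [], 0, 0
    for zone, (p, d) in ranked:
        if det / total_det >= target_share:
            break
        chosen.append(zone)
        psci += p
        det += d
    return chosen, psci / total_psci, det / total_det

zones, psci_share, det_share = concentration(cells, 0.65)
print(zones, f"{psci_share:.0%} of PSCIs", f"{det_share:.0%} of detentions")
```

With these made-up counts, two cells holding 20% of inspections account for 70% of detentions, which is the kind of pattern the red zones are meant to highlight.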

- The Takeaway

Reality: In PSC, MoU Does not Matter – Port & Ship combination Matters.

- The port matters.

- The local authority and its training matter.

- The individual inspector matters.

Operators who rely solely on MoU-level guidance risk being blindsided by local practice.

What should managers do?

- Shift from “MoU-level” preparation to port-level intelligence.

- Use data analytics to predict local risk.

- Train crews on flexibility and readiness for local variations.

At RISK4SEA, we provide detailed port-level insights to help managers prepare where it actually matters. Because in PSC, MoU does NOT matter – Port matters.

MYTH #3

Myth vs Reality: PSCI severity is more important than PSCI priority/probability

- The Myth

“PSCI Open Window and Ship Priority matter. I’ll just check the MoU site to see if my ship is eligible for inspection. If my inspection window is closed, I’m safe. I won’t be inspected.”

This is a widely held belief among ship managers and operators. The assumption is simple: “If my inspection window is closed or my priority is low, I’m safe. I won’t be inspected.”

But that assumption can be dangerously misleading.

- Oversimplification Bias - “Single-Number Comfort”

This bias sits behind Myth #3, where PSCI priority is treated as the main indicator of inspection exposure. PSC risk is multidimensional and highly context dependent, but decision-makers gravitate toward one easy metric. The result is false comfort and missed risk signals that only emerge through combined PRL and SPOT analysis.

- The Reality

REALITY #1

A large share of inspections happen outside the official “window”

Our research shows:

- 20% of PSC Inspections happen when the ship’s official PSCI window is closed.

- 25% of all detentions occur in these same supposedly “ineligible” situations.

In other words, 1 in 5 inspections and 1 in 4 detentions defy the standard eligibility logic. Relying only on “priority” and “window” status offers a false sense of security.

20% of PSC Inspections happen when the official PSCI window is closed
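The two shares quoted above can be reproduced on any inspection log that records the window status and the outcome. The sketch below assumes a simple record with two boolean fields; the `Inspection` type and the sample data are hypothetical, chosen only so the arithmetic is easy to follow.

```python
from dataclasses import dataclass

@dataclass
class Inspection:
    window_open: bool   # was the official PSCI window open at the time?
    detained: bool      # did the inspection end in a detention?

def closed_window_shares(inspections):
    """Return (share of PSCIs, share of detentions) that occurred with a closed window."""
    closed = [i for i in inspections if not i.window_open]
    detentions = [i for i in inspections if i.detained]
    closed_detentions = [i for i in detentions if not i.window_open]
    psci_share = len(closed) / len(inspections) if inspections else 0.0
    det_share = len(closed_detentions) / len(detentions) if detentions else 0.0
    return psci_share, det_share

# Hypothetical sample: 10 inspections, 2 with a closed window;
# 4 detentions, 1 of them with a closed window.
sample = (
    [Inspection(True, True)] * 3
    + [Inspection(True, False)] * 5
    + [Inspection(False, True)]
    + [Inspection(False, False)]
)
psci_share, det_share = closed_window_shares(sample)
print(f"{psci_share:.0%} of PSCIs, {det_share:.0%} of detentions with a closed window")
```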

REALITY #2

You have limited control over PSCI probability

Even if you carefully track the MoU site and your inspection window, you can’t control:

- Random selection processes.

- Information sharing between MoUs.

- Intelligence-led targeting.

- Special campaigns.

- Port State Control Officer (PSCO) discretion.

And once a PSCO steps onboard, you can’t tell them to leave. Your “priority” level becomes irrelevant in that moment.

REALITY #3

In risk management, severity always trumps probability

This is Risk Assessment 101:

- Probability tells you how likely something is to happen.

- Severity tells you how bad it is if it does happen.

Even if your inspection probability is low, the severity of a detention is high. Fines, delays, reputation damage, off-hire costs – the consequences can be enormous.

That’s why effective risk management focuses on severity.
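One way to picture a severity-first ranking is a small risk matrix in which severity sets the band and probability only orders cases within it. The 3x3 layout, the 1 to 3 rating scale, and the `risk_level` function below are assumptions made for this sketch; the source does not describe how its own risk levels are derived.

```python
# Assumed 3x3 layout: probability and severity are each rated 1 (low) to 3 (high).
def risk_level(probability: int, severity: int) -> int:
    """Map (probability, severity) to a level from 1 to 9, ranking severity first."""
    if probability not in (1, 2, 3) or severity not in (1, 2, 3):
        raise ValueError("ratings must be 1, 2 or 3")
    return (severity - 1) * 3 + probability

unlikely_but_severe = risk_level(probability=1, severity=3)
likely_but_minor = risk_level(probability=3, severity=1)
print(unlikely_but_severe, likely_but_minor)
```

Under this assumed scheme, an unlikely but severe event always ranks above a likely but minor one, which is the point the section makes about detentions.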

REALITY #4

Data proves this popular myth is inaccurate

RISK4SEA’s ongoing research on PSCI Risk Levels (see: www.risk4sea.com/PRL) reveals trends that directly contradict this myth:

- 1 out of 5 PSCIs occur with closed windows.

- 1 out of 4 detentions happen outside official eligibility.

Many inspections are driven by intelligence or other unpredictable factors, not MoU-listed windows or priorities.

1 out of 5 PSCIs occur with closed windows

- At A Glance

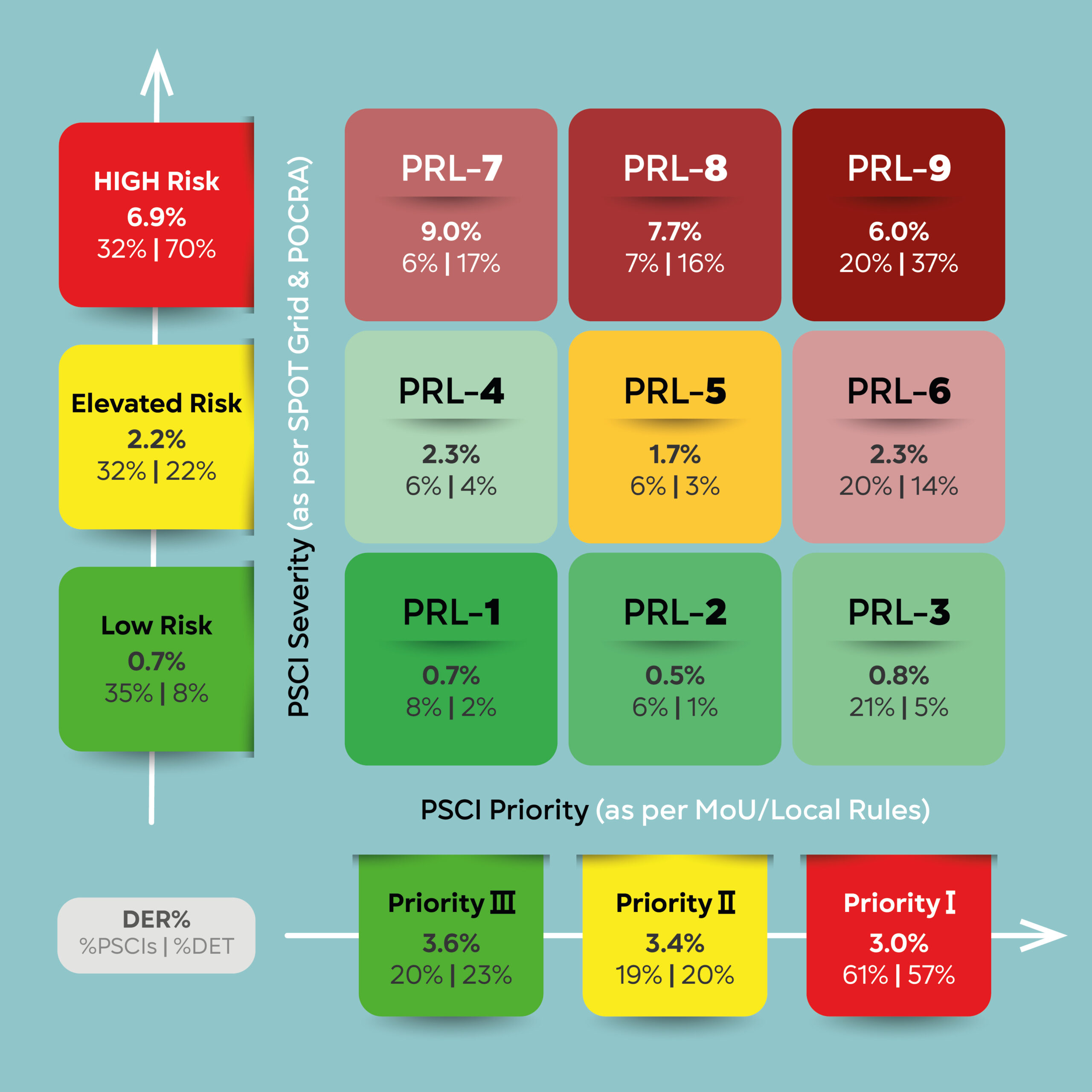

The PRL Matrix combines actual data from more than 230k PSCIs over the last 36 months with the results of the POCRA, which assesses PSCI Probability and PSCI Severity to generate a unique Risk Level.

The PSC Risk Levels (PRL) represent risk conditions of escalating severity (e.g. PRL 2 represents a higher PSC risk than PRL 1, and so on), with PRL 9 being the highest risk a ship may face.

- The Takeaway

Reality: PSCI priority is an illusion – Ship & Port Tier Combination (SPOT) matters.

- Recognize that PSCI Severity “eats” Probability for breakfast.

- Focus on always-on readiness.

- Build a robust inspection-prepared culture.

- Use data to understand real risk, not just MoU-listed priorities.

At RISK4SEA, our mission is to replace guesswork and myths with evidence-based insights that help managers reduce real operational risk. Because in PSC, it’s not about if you’ll be inspected – it’s about how ready you are when it happens.

MYTH #4

Myth vs Reality: A Good PSC Checklist Is Not Enough Unless It Is Ship & Port Specific

- The Myth

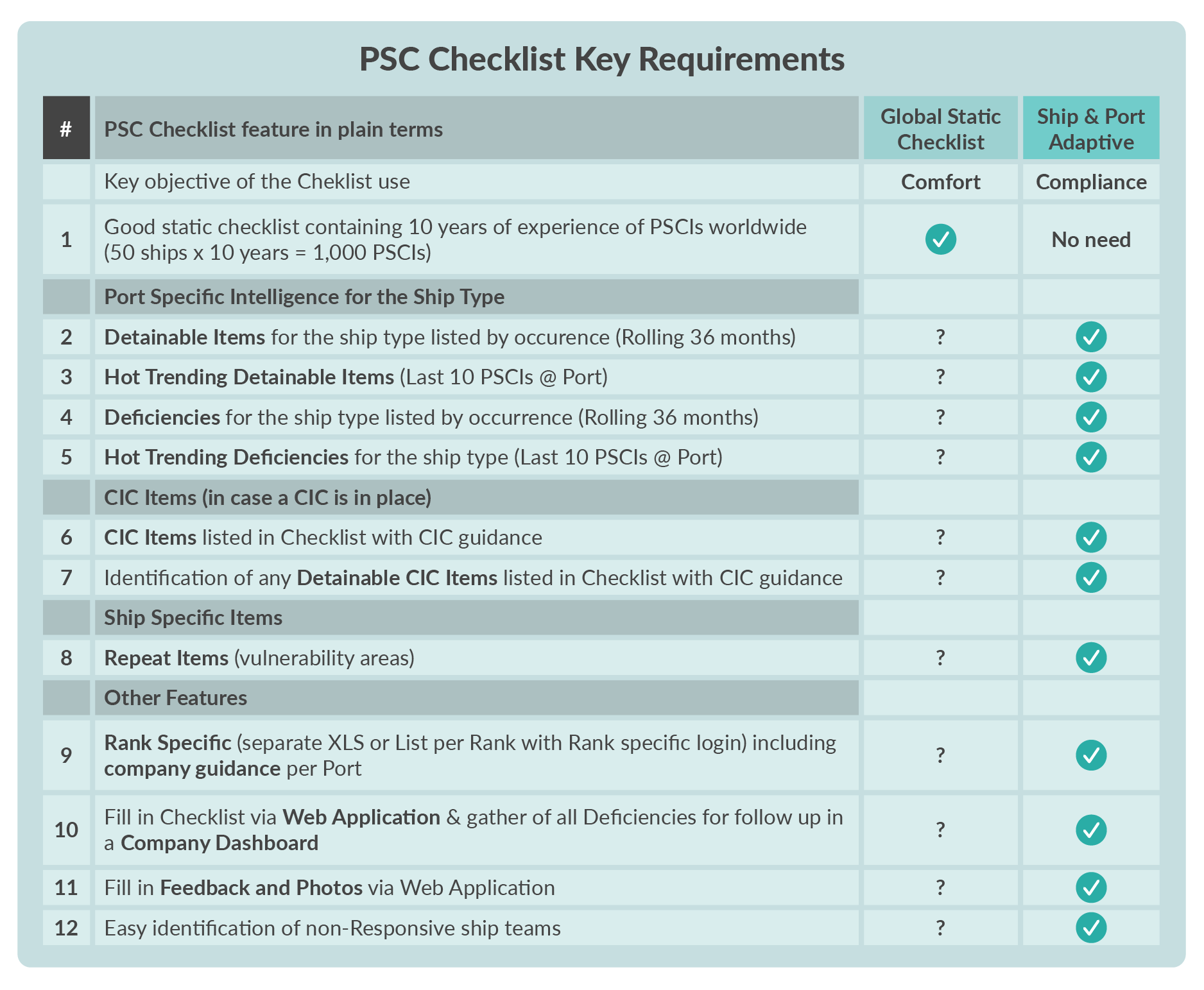

“I have a good static PSC checklist – it’s from Class, Flag, colleagues, and past experience over the last 10 years. I’ve even added a Top 20 list of detainable items. That should cover it.”

This is one of the most persistent illusions in PSC preparation. It gives crews and superintendents the comfort of structure and simplicity. But unfortunately, it also gives them false confidence.

A checklist built on global statistics or generic advice will not reflect the real risks faced in specific ports, especially when ship types, regimes, and regional enforcement patterns vary dramatically.

- Compliance Bias - “Checklist Comfort”

This bias underpins Myth #4 and reflects the industry’s box-ticking culture. Many operators equate completing a generic checklist with being PSC-ready, primarily to satisfy perceived authority expectations. In practice, static compliance does not control dynamic port risk, and true readiness requires adaptive, risk-based preparation.

- The Reality

REALITY #1

Static checklists lack critical port intelligence

Generic “Top XX” checklists are often seen as “safe.” But when RISK4SEA tested them against real PSCI data across high-activity ports, the result was striking:

The global Top 20 strategy missed more than half (over 50%) of the actual detainable deficiency codes found during inspections.

- In over 1,090 ports (86% of ports), the checklist missed more than half of the real detainable items.

- In over 120K PSCIs (91% of PSCIs), the checklist missed more than half of the real detainable items.

- In over 6K Detentions (94% of Detentions), the checklist missed more than half of the real detainable items.

This means crews may diligently tick all boxes – yet remain exposed to the exact deficiencies that get ships detained in that specific port.

50% or more of actual detainable deficiency codes were missed with a global Top 20 strategy in 94% of Detentions
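A coverage test of this kind boils down to a set comparison between the checklist and the detainable codes actually recorded in a port. The sketch below shows that comparison; the deficiency codes and the `missed_share` helper are placeholders, not real PSC codes or RISK4SEA's method.

```python
def missed_share(checklist_codes, port_detainable_codes):
    """Fraction of a port's detainable deficiency codes NOT on the checklist."""
    port_codes = set(port_detainable_codes)
    if not port_codes:
        return 0.0
    return len(port_codes - set(checklist_codes)) / len(port_codes)

# Hypothetical codes, for illustration only.
global_top = {"C01", "C02", "C03", "C04"}
port_codes = {"C02", "C04", "C11", "C12", "C13"}

share = missed_share(global_top, port_codes)
print(f"The checklist misses {share:.0%} of this port's detainable codes")
```

Here the generic list covers only two of the port's five detainable codes, so it misses 60% of them even if every box is ticked.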

REALITY #2

Static checklists prioritize comfort over detention avoidance

Why are these static checklists still used? Because they’re easy and do not take office or ship staff out of their comfort zone.

- They’re manageable, short, and familiar.

- Crews can complete them, managers can review them, and the forms look clean.

But this efficiency is misleading: “The checklist is easy because it ignores the majority of items that actually lead to detention in that specific port.”

Checklist completion becomes a bureaucratic comfort – not a risk control strategy.

REALITY #3

Real life bites – checklists must be dynamic and risk-weighted

If your checklist were truly effective, detentions would vanish. But they don’t. Why?

Because real life – and real PSCI focus – varies per port, ship type, and campaign.

A RISK4SEA study confirms that each port had an average of 14 and a median (PSCI weighted) of 23 unique detainable codes, while many ports had more than 30. Detention risk is fragmented, dynamic, and local.

A static list may have worked yesterday. It will not work tomorrow.

23 unique detainable codes in the median port

- At A Glance

A short or fixed global checklist may reduce effort, but it does not reduce risk.

According to the white paper:

- Global Top 20 checklists fail to align with real port-level enforcement.

- Compliance with such checklists gives a false sense of readiness.

- PSC inspections remain unpredictable unless preparation adapts to local patterns.

- The Takeaway

Reality: An effective PSC checklist must be dynamic, port-specific, and prioritized. It should include port-specific detainable items, by ship type, prioritized by occurrence. Choosing comfort over compliance is a plan to fail.

To align with real-world detention risk:

- Start with port-specific detainable history, not global statistics.

- Filter by ship type, as detention profiles vary widely across fleets.

- Order by frequency, not alphabetically or arbitrarily.

Crew focus must narrow only when data justifies it. In high-variance ports, the checklist must expand – even if this adds workload. Reducing workload must not be mistaken for reducing risk.
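The three steps above (start from port history, filter by ship type, order by frequency) can be sketched in a few lines. The record layout, port names, and codes below are hypothetical, and `port_checklist` is an illustrative helper rather than an existing tool.

```python
from collections import Counter

def port_checklist(detainable_records, port, ship_type):
    """Detainable codes seen for one port and ship type, most frequent first."""
    counts = Counter(
        code
        for rec_port, rec_type, code in detainable_records
        if rec_port == port and rec_type == ship_type
    )
    # most_common() orders by descending count; ties keep first-seen order.
    return [code for code, _ in counts.most_common()]

# Hypothetical detainable findings: (port, ship type, deficiency code).
records = [
    ("PORT_X", "Bulker", "C11"),
    ("PORT_X", "Bulker", "C02"),
    ("PORT_X", "Bulker", "C11"),
    ("PORT_X", "Tanker", "C07"),
    ("PORT_Y", "Bulker", "C02"),
]

checklist = port_checklist(records, "PORT_X", "Bulker")
print(checklist)
```

The list grows or shrinks with the port's actual history, so a high-variance port naturally produces a longer checklist than a quiet one.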

Ship managers need to move away from legacy comfort checklists. Detentions are not avoided by familiarity – they are avoided by relevance, risk-focus, and real-time intelligence.

At RISK4SEA, we provide the tools to do exactly that. Because in PSC, a “good” checklist isn’t enough unless it knows the ship, and the port.

MYTH #5

Myth vs Reality: To Monitor PSC Performance, You Need the Port KPIs to Do It

- The Myth

“I know how my fleet performs because I monitor my own fleet’s deficiencies, detentions, and trends over time. I have the full picture, and I know where my PSC pain points are.”

Many managers assume that because they see their fleet’s own inspection records, they know how well they are performing. They look at their ships’ deficiencies, detentions, and perhaps trends over time, and think they have the full picture.

But that assumption misses a fundamental requirement for real performance management: benchmarking.

- Aggregation Bias - “Average Comfort”

This bias explains Myth #5, where fleet-level KPIs create a misleading sense of control. High-level averages often mask port-specific risk pockets and vessel-level exposure. Managers believe they understand performance because headline metrics look acceptable, while localized PSC vulnerability remains unaddressed.

- The Reality

REALITY #1

Benchmarking is about deviation from a standard, not absolute values

The basics of benchmarking require that you compare your performance against a meaningful reference point.

In the PSC world, that means measuring your ship’s or fleet’s Key Performance Indicators (KPIs):

- Deficiency Per Inspection (DPI) to assess deficiencies vs benchmark

- Detention Rate (DER) to assess detentions vs benchmark

- SMS Deficiency Rate (SDR) to assess ISM & repeat deficiencies vs benchmark

- Predicted Deficiency Index (PDI) to assess how predictable the deficiencies were

- Human Factors & Crewing DPI to assess relevant topic findings vs benchmark

- Regulations & Operational Compliance DPI to assess relevant topic findings vs benchmark

- Ship Structure & Equipment DPI to assess relevant topic findings vs benchmark

Against what?

- The Key Performance Indicator (KPI) for the same ship type (Bulkers, Tankers, Containers, etc.)

- In the specific port where inspections occurred

- Over a meaningful period (e.g. the past 36 months)

Without this context, raw numbers are meaningless.
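For reference, the two headline KPIs can be computed from a plain list of inspection outcomes. The sketch assumes DPI means total deficiencies divided by the number of PSCIs and DER means detentions divided by the number of PSCIs; the `psc_kpis` helper and the sample fleet are hypothetical.

```python
def psc_kpis(inspections):
    """inspections: list of (deficiency_count, detained) tuples.
    Returns (DPI, DER) under the assumed per-inspection definitions."""
    n = len(inspections)
    if n == 0:
        raise ValueError("no inspections")
    dpi = sum(defs for defs, _ in inspections) / n
    der = sum(1 for _, detained in inspections if detained) / n
    return dpi, der

# Hypothetical fleet history: 5 PSCIs, 20 deficiencies, 1 detention.
fleet = [(2, False), (0, False), (7, True), (1, False), (10, False)]

dpi, der = psc_kpis(fleet)
print(f"DPI = {dpi:.1f}, DER = {der:.0%}")
```

On their own these values say little; they become meaningful only once they are set against the matching port and ship-type benchmark.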

REALITY #2

Each port has its own PSC profile

Ports are not interchangeable. Inspection cultures, local risk focus, inspector training, and campaigns vary widely.

For example:

- A DPI of 0.5 in a port with a bulk carrier average of 0.3 is underperformance.

- The same DPI of 0.5 in a port where the average is 1.2 is excellent.

Without knowing the port-specific benchmark, you can’t tell the difference.

A DPI of 0.5 in a port with a bulk carrier average of 0.3 is underperformance
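The comparison in the example can be expressed as a simple ratio of the ship's DPI to the port benchmark. The `versus_benchmark` helper is a hypothetical name used only for this sketch; it is one straightforward way to express deviation, not a documented RISK4SEA metric.

```python
def versus_benchmark(value, benchmark):
    """Ratio of a ship's DPI to the port benchmark. Above 1.0 means more
    deficiencies per inspection than the port average (lower DPI is better)."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    return value / benchmark

low_average_port = versus_benchmark(0.5, 0.3)
high_average_port = versus_benchmark(0.5, 1.2)
print(f"{low_average_port:.2f}x vs {high_average_port:.2f}x the port average")
```

The same DPI of 0.5 lands well above the average in the first port and well below it in the second.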

REALITY #3

Ship type matters within each port

Even within the same port, PSC statistics differ by ship type:

- Bulk Carriers may be targeted differently than Tankers.

- Containers may face entirely different deficiency profiles.

A proper benchmark must match ship type within port. Generalized numbers won’t cut it.

REALITY #4

You need both the average and the volume of inspections

To even create a reliable benchmark, you need:

- The average KPI for each port and ship type.

- The number of PSC inspections (PSCIs) for that ship type in that port.

Why? Because inspection volume gives the benchmark its weight.

- A port with 500 bulk carrier inspections has a robust average.

- A port with 5 inspections does not.
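A short sketch shows why volume matters when port averages are combined: a plain mean lets a port with 5 inspections count as much as one with 500, while a PSCI-weighted mean does not. The DPI averages below are invented, and `weighted_benchmark` is an illustrative helper, not a function from any library.

```python
def weighted_benchmark(port_stats):
    """port_stats: list of (average_dpi, psci_count). Returns the PSCI-weighted mean."""
    total = sum(n for _, n in port_stats)
    if total == 0:
        raise ValueError("no inspections")
    return sum(avg * n for avg, n in port_stats) / total

# Hypothetical bulk carrier averages: a busy port and a rarely inspected one.
stats = [(1.0, 500), (4.0, 5)]

simple = sum(avg for avg, _ in stats) / len(stats)
weighted = weighted_benchmark(stats)
print(f"simple mean = {simple:.2f}, PSCI-weighted mean = {weighted:.2f}")
```

The weighted figure stays close to the busy port's average, because the five-inspection port contributes very little evidence.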

- At A Glance

Missing Port Averages Means Missing True Performance Insight

If you don’t know the Port KPI averages for each PSCI your ship undergoes, you’re missing the most basic context:

- Is your ship better or worse than the port average?

- Are you improving relative to where you’re actually being inspected?

- Where are your real pain points?

Without these Port KPIs, you’re managing blind.

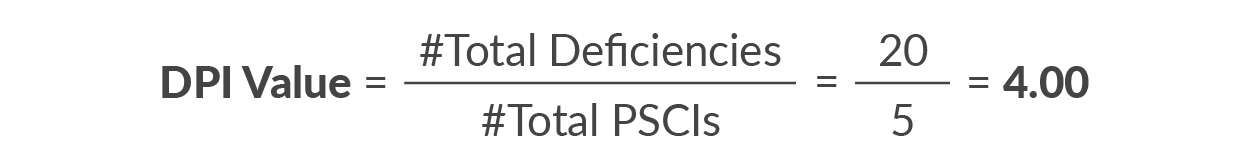

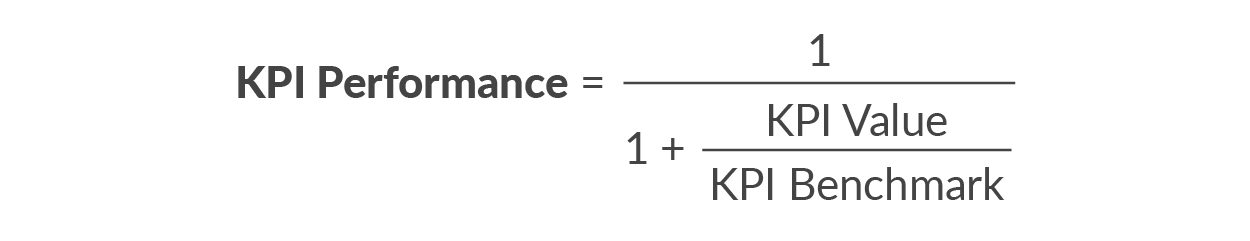

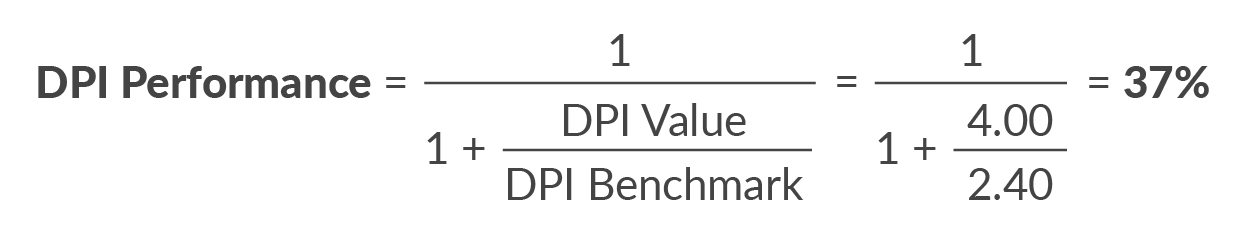

KPI Calculation Worked Example

DPI Value (Ship, Manager, Port, etc.): #DEFs / #PSCIs

Example: Deficiencies = 20, PSCIs = 5, DPI Value = 4

DPI Benchmark (PSCI History): average #DEFs / #PSCIs of the PSCI history over the last 36 months (L36M)

Example: Deficiencies = 240, PSCIs = 100, DPI Benchmark = 2.4

Scoring formula: used when the BEST value is zero or the closest to zero, to provide a SCORE from 0% to 100%, with 50% = Benchmark.

SCORE from 0% to 100% indicating:

- 0% = Worst

- 50% = Average (KPI Value = KPI Benchmark)

- 100% = Best (KPI Value = 0 & KPI Benchmark > 0)
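The scoring formula itself is not given in the text, so the sketch below uses one simple curve that satisfies the three stated anchor points: benchmark / (benchmark + value). This is an assumption chosen for illustration and may differ from the formula RISK4SEA actually applies; `kpi_score` is a hypothetical name.

```python
def kpi_score(value, benchmark):
    """Assumed scoring curve: benchmark / (benchmark + value).
    Gives 1.0 when value is 0, 0.5 when value equals the benchmark,
    and approaches 0.0 as value grows."""
    if value < 0 or benchmark <= 0:
        raise ValueError("value must be >= 0 and benchmark must be > 0")
    return benchmark / (benchmark + value)

# Figures from the worked example: DPI Value = 20 / 5, DPI Benchmark = 240 / 100.
score = kpi_score(20 / 5, 240 / 100)
print(f"score = {score:.1%}")
```

Under this assumed curve, a DPI of 4 against a benchmark of 2.4 scores 37.5%, below the 50% that marks average performance.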

- The Takeaway

Reality: PSC performance monitoring requires Port-level, ship-type-specific benchmarking.

- Don’t just track your own DPI and DER in isolation.

- Compare them against Port KPIs for the same ship type.

- Use weighted averages to see where your real risks lie.